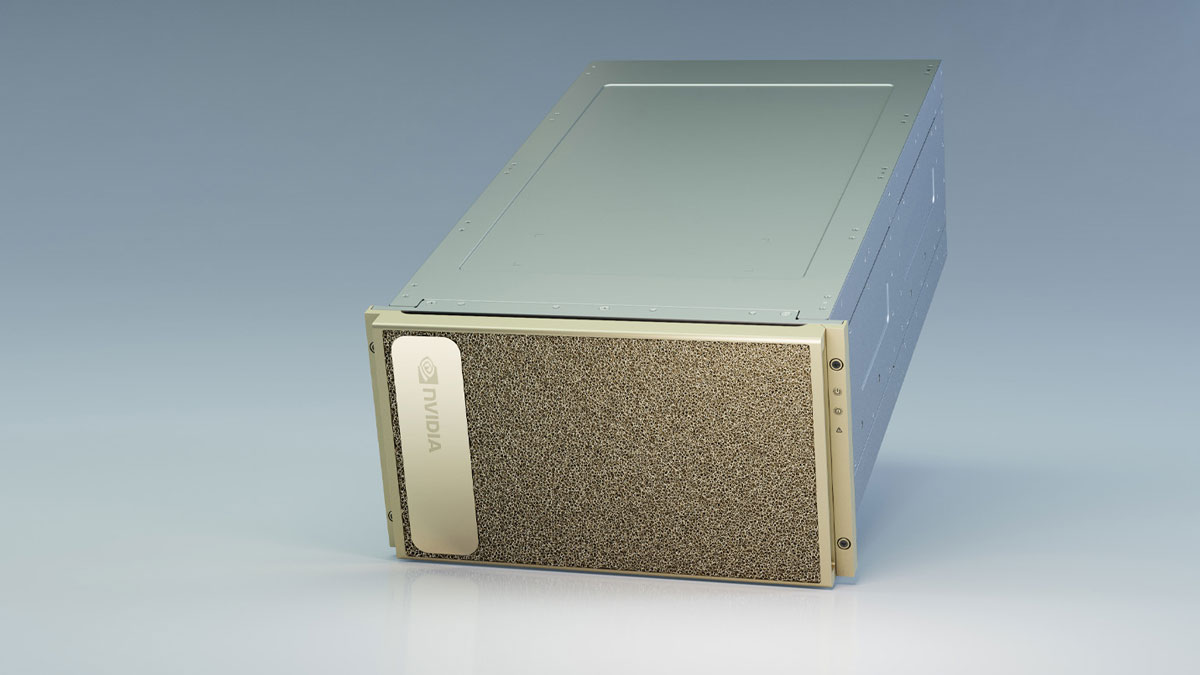

NVIDIA today announced Vietnam’s first deployment of the new NVIDIA DGX A100 system at VinAI, the country’s first research lab. VinAI has big plans for the GPU-accelerated data centre in a box, which offers 5 petaflops of AI computing power and integrates eight NVIDIA A100 Tensor Core GPUs.

The new AI system is a perfect fit for training language, image and video models, as well as other ambitious projects that utilise a large amount of GPU power and high-speed interconnect technology. The research lab aims to advance understanding of the fundamentals in machine learning and deep learning and to investigate how they enable new AI methods in computer vision and natural language understanding.

VinAI will focus on the development of new AI applications, especially those that help enable more natural human interaction with machines through voices, gestures, behaviours, and biometrics, or from smart sensors and devices. From its strategic location in Southeast Asia, VinAI is drawn towards major problems in developing countries that might otherwise be overlooked in the research community.

VinAI has contributed to the global fight against the COVID-19 pandemic by automatically analysing tweets for COVID-19 events and enabling face recognition of people wearing face masks. The lab wanted to expand its NVIDIA DGX-1 cluster to improve its scale and performance, especially for training large Vietnamese language models. The NVIDIA DGX A100 is the ideal solution for powering today’s most challenging AI workloads, from training to inference to data analytics.

“Our lab’s utilisation is always maxed out at 100 percent so the new DGX A100 system will give our team the compute power to tackle our most complex problems,” said Dr. Bui Hai Hung, Director, VinAI.

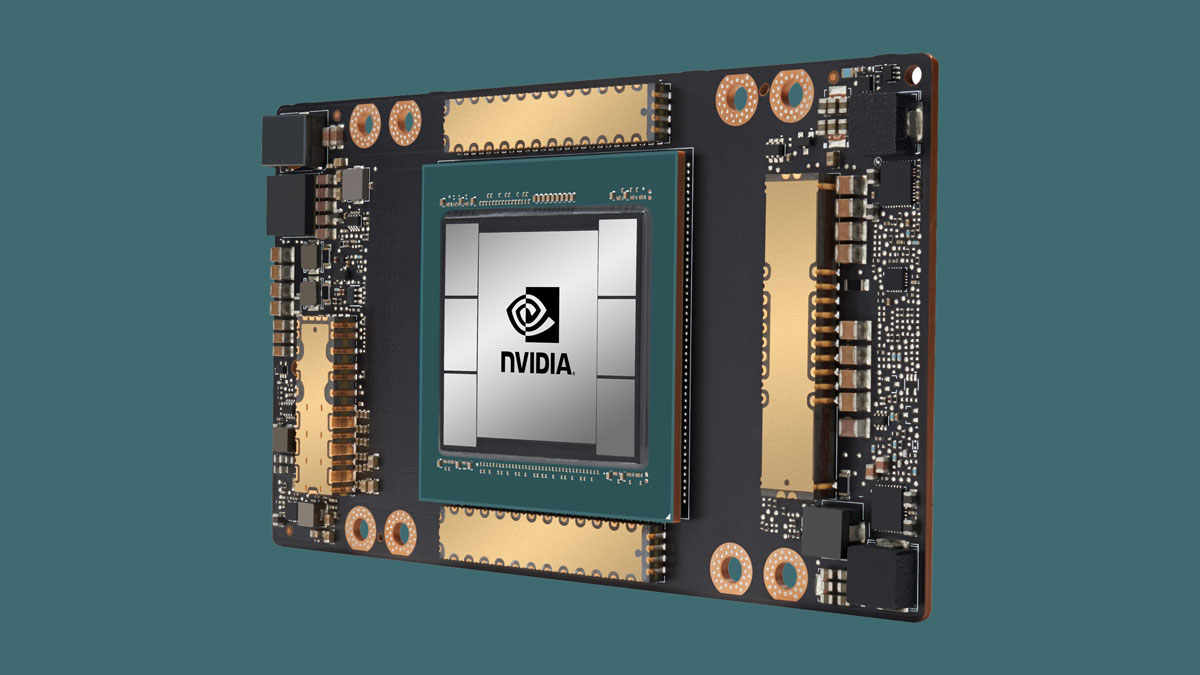

Around 70 research scientists, residents and engineers will be relying on the new AI system, which will be part of VinAI’s NVIDIA DGX cluster. This number is expected to double by the end of this year.

Accelerated data centre in a box

The NVIDIA DGX A100 is a 5-petaflops accelerated data centre in a box that provides the power and performance AI researchers need. Based on the NVIDIA Ampere architecture, it packs eight NVIDIA A100 Tensor Core GPUs to provide 320GB of memory for training on large AI datasets, as well as for inference and data analytics workloads.

Using multi-instance GPU technology, multiple smaller workloads can be supported by partitioning the DGX A100 into as many as 56 instances. In combination with integrated high-speed NVIDIA Mellanox HDR networking interconnects, the DGX A100 delivers an elastic infrastructure for research centres.
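For readers who want to see how the system-level figures break down per GPU, the short Python sketch below divides the totals quoted above (eight GPUs, 320GB of memory, up to 56 instances) evenly across the GPUs. The even split is an assumption made for illustration, and the function name `per_gpu_breakdown` is hypothetical rather than part of any NVIDIA tool.

```python
# Figures quoted in the article for one DGX A100 system.
GPUS_PER_SYSTEM = 8
TOTAL_MEMORY_GB = 320
MAX_MIG_INSTANCES = 56


def per_gpu_breakdown(gpus, total_memory_gb, max_instances):
    """Return (memory per GPU in GB, instances per GPU).

    Assumes memory and instances are spread evenly across the GPUs;
    this is an illustrative assumption, not a statement of how a
    given system is actually configured.
    """
    if total_memory_gb % gpus or max_instances % gpus:
        raise ValueError("totals do not divide evenly across GPUs")
    return total_memory_gb // gpus, max_instances // gpus


memory_per_gpu_gb, instances_per_gpu = per_gpu_breakdown(
    GPUS_PER_SYSTEM, TOTAL_MEMORY_GB, MAX_MIG_INSTANCES
)
print(f"{memory_per_gpu_gb} GB per GPU, up to {instances_per_gpu} instances per GPU")
```

Under that assumption, each A100 contributes 40GB of memory and can be split into as many as seven instances, which is where the system-wide total of 56 comes from.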

More information about the DGX A100 is available at https://www.nvidia.com/dgxa100.