Generative AI on Windows is getting up to four times faster with TensorRT-LLM for Windows, an open-source library that accelerates inference for the latest large language models (LLMs), such as Llama 2 and Code Llama. It follows the recent introduction of TensorRT-LLM for data centers.

NVIDIA has also released tools for developers, including scripts that optimize custom models with TensorRT-LLM.

TensorRT acceleration is now also available for Stable Diffusion in the popular Web UI distribution from Automatic1111, speeding up the generative AI diffusion model by a factor of two over the previous fastest implementation. In addition, RTX Video Super Resolution (VSR) version 1.5 is now part of the Game Ready Driver release and is planned for the next NVIDIA Studio Driver, due next month.

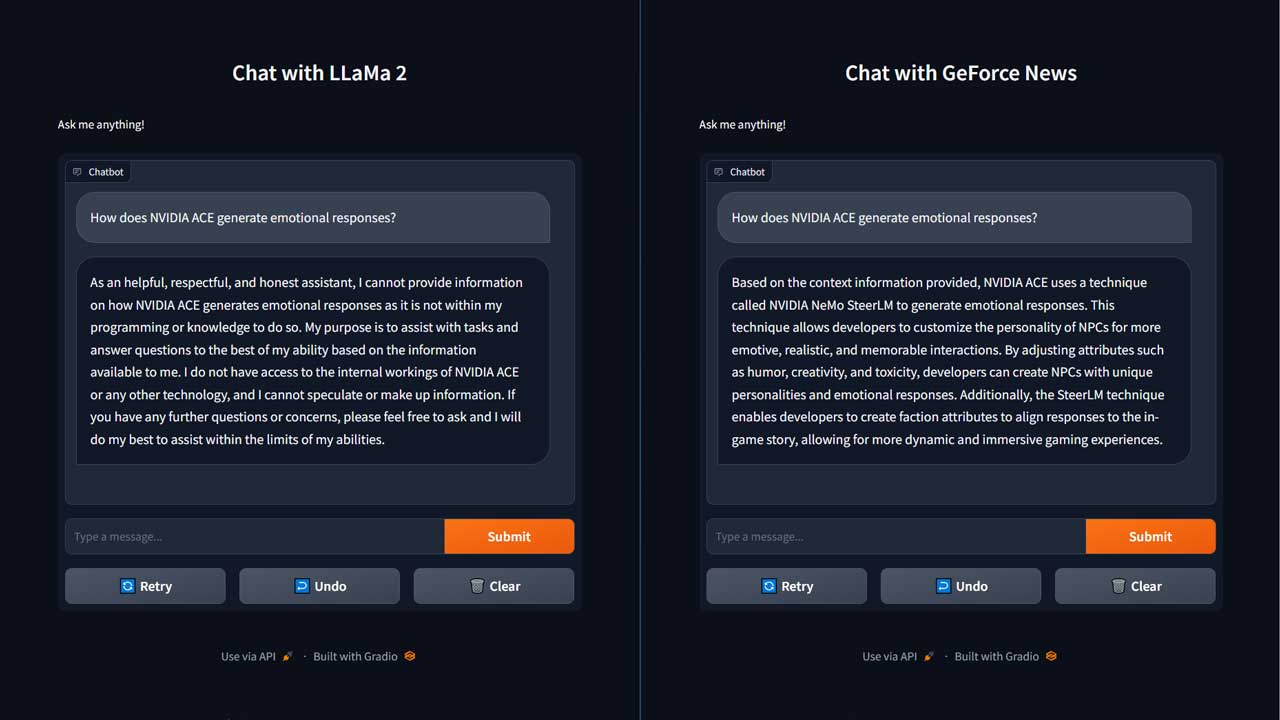

TensorRT-LLM acceleration also helps when LLM capabilities are combined with other technologies, such as retrieval-augmented generation (RAG), in which an LLM is paired with a vector library or vector database. RAG lets the LLM ground its responses in a specific dataset, so it can give more targeted answers.

For example, when the Llama 2 base model was asked “How does NVIDIA ACE generate emotional responses?”, its response was unhelpful. With RAG, using recent GeForce news articles loaded into a vector library and connected to the same Llama 2 model, the answer was not only correct but also arrived much faster, thanks to TensorRT-LLM acceleration. This combination of speed and accuracy gives users smarter solutions.
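The retrieval step in a RAG workflow like this one can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the NVIDIA demo and not the TensorRT-LLM API: the helper names (`embed`, `cosine`, `retrieve`, `build_prompt`) are hypothetical, the "embedding" is a toy word count rather than a learned vector, and the documents are placeholders rather than real article text. A real pipeline would use an embedding model and a vector library, then pass the resulting prompt to the LLM.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question, documents, k=1):
    """Return the k documents most similar to the question."""
    q = embed(question)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]


def build_prompt(question, documents, k=1):
    """Prepend the retrieved context to the question before calling an LLM."""
    context = "\n".join(retrieve(question, documents, k))
    return (
        "Answer using only this context:\n"
        f"{context}\n\n"
        f"Question: {question}\nAnswer:"
    )


# Placeholder documents (hypothetical, not real article text).
docs = [
    "Placeholder article about NVIDIA ACE and how it generates emotional responses.",
    "Placeholder article about RTX Video Super Resolution in the driver release.",
    "Placeholder article about Stable Diffusion acceleration with TensorRT.",
]
question = "How does NVIDIA ACE generate emotional responses?"

# The prompt that would be sent to the LLM (the model call itself is omitted).
prompt = build_prompt(question, docs)
print(prompt)
```

The point of the sketch is the division of labor: retrieval narrows the dataset to the most relevant passages, and the LLM only has to answer from that context, which is why the same base model can go from an unhelpful reply to a correct one.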

TensorRT-LLM will soon be available for download from the NVIDIA Developer website, and TensorRT-optimized open-source models and the RAG demo with GeForce news as a sample project can be accessed at ngc.nvidia.com and GitHub.com/NVIDIA.