As the tech world debates the safety and cost of autonomous AI, NVIDIA has stepped in with a hands-on guide for those who want to keep their agents local. The company recently published a detailed walkthrough for deploying OpenClaw, one of the newest AI agent frameworks, on your own hardware rather than relying on expensive, privacy-hungry cloud APIs.

The Rise of the Local Agent

OpenClaw (formerly known as Clawdbot) has gained traction for its ability to act as a persistent personal secretary. Unlike standard chatbots, it can stay active 24/7, digging through your local files, managing your calendar, and drafting emails autonomously.

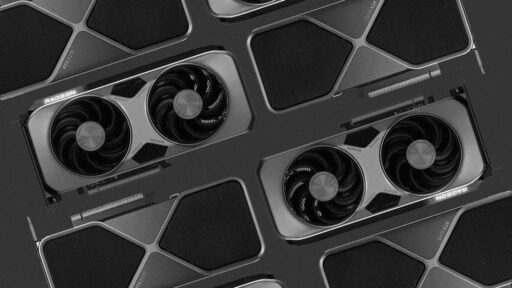

By shifting the workload to the Tensor Cores in RTX GPUs, you can run the underlying models without paying per-token API fees, and sensitive data never leaves the machine.

RTX vs. DGX Spark

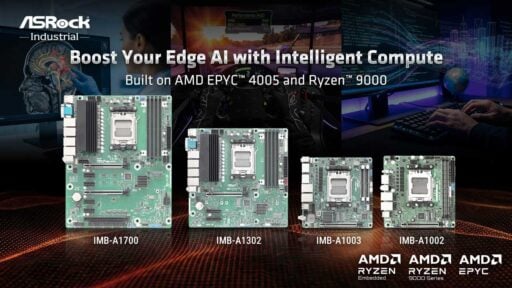

NVIDIA's guide offers two deployment paths:

- GeForce RTX GPUs: On Windows PCs, the guide runs OpenClaw through Windows Subsystem for Linux (WSL). CUDA acceleration and the Blackwell architecture of the RTX 50 series deliver the quick response times a desktop assistant needs.

- DGX Spark: For users who need serious “headroom,” NVIDIA recommends the DGX Spark. This deskside supercomputer has 128 GB of unified memory, enough to run much larger models (up to 200 billion parameters) than fit in a consumer GPU's VRAM; even the flagship GeForce RTX 5090 has 32 GB.
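Why 128 GB matters becomes clearer with some back-of-envelope arithmetic. The sketch below estimates only the memory needed to hold a model's weights, using parameter count × bits per weight ÷ 8. It assumes the "200 billion parameters" figure refers to a heavily quantized (roughly 4-bit) model, and it ignores the KV cache, activations, and runtime overhead, all of which add more. The function and constant names are hypothetical, chosen for illustration only.

```python
# Rough, weights-only memory estimate for running an LLM locally.
# Assumption: memory ~= parameter count * bits per weight / 8.
# KV cache, activations, and runtime overhead are NOT included.

DGX_SPARK_MEMORY_GB = 128  # unified memory figure quoted in the article
RTX_5090_VRAM_GB = 32      # consumer flagship, for comparison


def weight_memory_gb(num_params: float, bits_per_weight: int) -> float:
    """Return approximate weight storage in decimal gigabytes (1 GB = 1e9 bytes)."""
    total_bytes = num_params * bits_per_weight / 8
    return total_bytes / 1e9


if __name__ == "__main__":
    params = 200e9  # the "up to 200 billion parameters" figure
    for bits in (16, 8, 4):
        need = weight_memory_gb(params, bits)
        print(
            f"{bits:>2}-bit weights: {need:6.1f} GB | "
            f"fits DGX Spark: {need < DGX_SPARK_MEMORY_GB} | "
            f"fits RTX 5090: {need < RTX_5090_VRAM_GB}"
        )
```

At 16 bits per weight, a 200-billion-parameter model needs about 400 GB just for its weights, far beyond either machine. At 4 bits it drops to about 100 GB, which fits within the Spark's 128 GB with some room left for the KV cache, but is still more than three times what a 32 GB consumer card can hold.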

A High-Stakes Moment for OpenClaw

NVIDIA’s guide arrives at a chaotic time for the OpenClaw project. Just days ago, OpenAI made waves by hiring the project’s founder, Peter Steinberger, to lead its personal agent division. Almost simultaneously, Meta and several other major firms reportedly banned the tool from their internal networks, citing security concerns over how autonomous agents interact with corporate data.

NVIDIA addresses these risks directly in the guide, recommending that users run the software in virtual machines (VMs) or on clean, dedicated PCs to limit potential data leaks.

| Feature | Benefit |
| --- | --- |
| Privacy | Your files and chat history stay on local storage. |
| No API Fees | No per-token fees for always-on background tasks. |
| Scaling | Use DGX Spark for massive models or RTX for daily speed. |
| WSL Support | Optimized for stability under Windows Subsystem for Linux. |

Note: NVIDIA suggests starting with a “clean” environment. Don’t give an autonomous agent full access to your primary accounts until you’ve vetted the specific “skills” you’re enabling.