NVIDIA’s GPU Technology Conference (GTC) 2026 shifted the industry’s gaze from simple pixel upscaling to a fundamental transformation of how game assets are stored and rendered. While DLSS 5 captured headlines with its ambitious neural rendering model, the official release of the RTXNTC (Neural Texture Compression) SDK on GitHub might be the more significant breakthrough for the broader gaming community.

By reimagining textures as learned neural representations rather than static data blocks, NTC addresses the industry’s most persistent hardware bottleneck: Video RAM.

Solving the VRAM Crisis

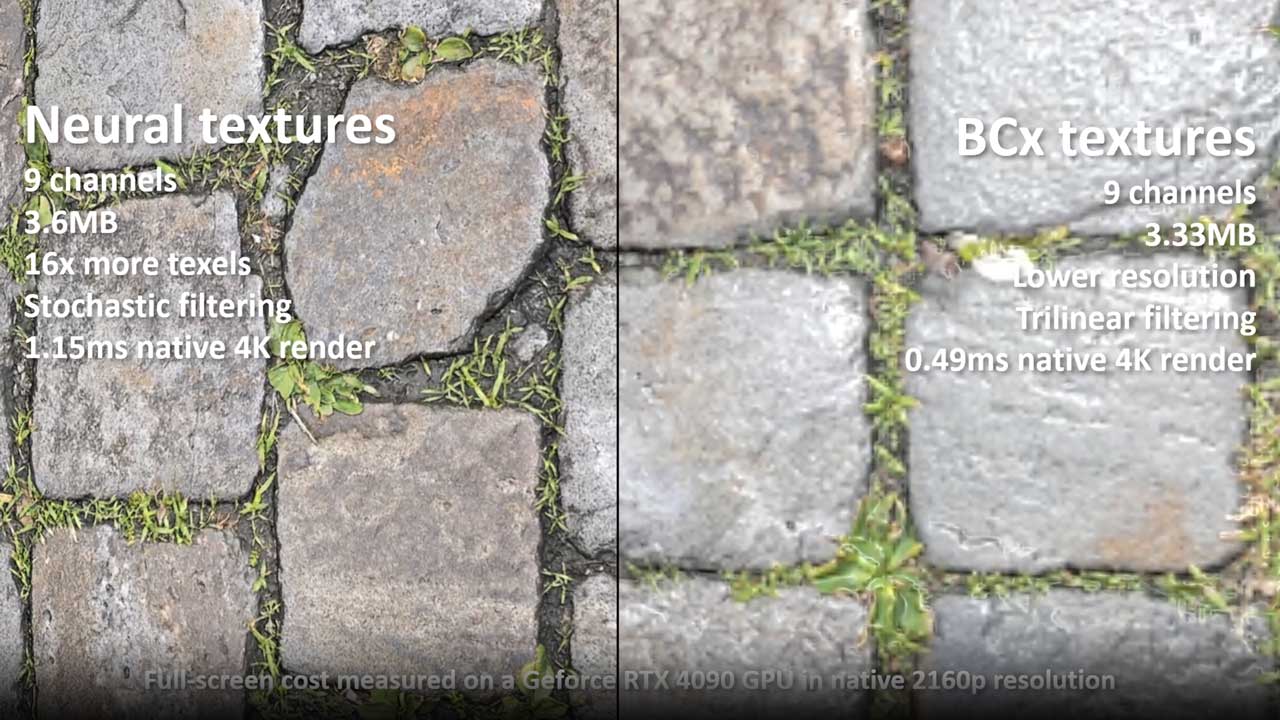

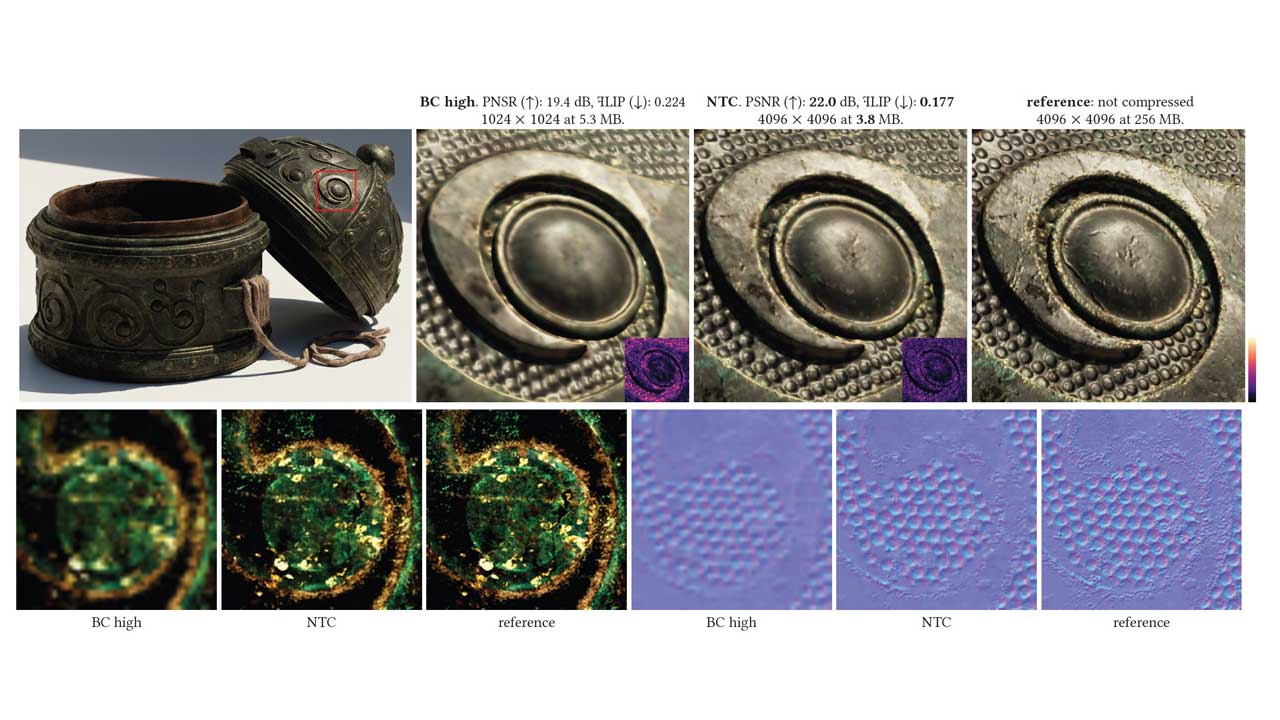

For decades, game developers have relied on block-based compression (BCn) to manage textures. While reliable, this method is increasingly inefficient for 4K and 8K assets, leading to the massive VRAM requirements that often choke mid-range GPUs. NTC replaces these rigid blocks with small, highly optimized neural networks. During the GTC keynote, NVIDIA demonstrated this in a Tuscan Villa scene where VRAM usage plummeted from 6.5 GB using standard BC7 compression to just 970 MB with NTC. This 85% reduction suggests a future where 8 GB or 12 GB cards can handle ultra-fidelity textures that would previously have required professional-grade hardware.
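The reduction quoted for the demo can be checked with a few lines of arithmetic. This is only a sanity check on the two figures given above, assuming decimal units (1 GB = 1000 MB); the function name is made up for illustration.

```python
def vram_reduction(before_mb: float, after_mb: float) -> float:
    """Return the fractional reduction going from before_mb to after_mb."""
    if before_mb <= 0:
        raise ValueError("before_mb must be positive")
    return (before_mb - after_mb) / before_mb


# Figures quoted for the Tuscan Villa demo (assumption: 1 GB = 1000 MB).
bc7_mb = 6.5 * 1000
ntc_mb = 970.0

reduction = vram_reduction(bc7_mb, ntc_mb)
ratio = bc7_mb / ntc_mb
print(f"reduction: {reduction:.1%}, ratio: {ratio:.1f}x")
```

With these inputs the script prints `reduction: 85.1%, ratio: 6.7x`, so the 85% figure corresponds to the NTC version of the scene using roughly one seventh of the texture memory.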

Foundational Efficiency vs. Generative Luxury

The comparison between NTC and DLSS 5 highlights a choice between foundational efficiency and top-end luxury. DLSS 5 is a generative powerhouse designed to “infuse” pixels with photoreal lighting — a process so computationally demanding that NVIDIA’s GTC 2026 demo required two RTX 5090s: one to render the game and a second dedicated entirely to running the DLSS 5 model. This was an engineering workaround for a pre-release technology, not a consumer requirement; NVIDIA has confirmed DLSS 5 will run on a single RTX 50-series GPU when it ships. In contrast, NTC is a “bottom-up” technology. It doesn’t just make the final image look better; it frees up massive amounts of memory and bandwidth that the GPU can then use for better physics, more complex geometry, or higher frame rates. It is a utility that improves every aspect of the rendering pipeline.

Accessibility Across Hardware Generations

One of the most compelling arguments for NTC is its versatility. According to the SDK documentation, NTC offers three distinct modes: Inference on Sample, Inference on Load, and Inference on Feedback. While the “Inference on Sample” mode provides the most dramatic VRAM savings by decompressing textures in real time via Tensor Cores, the “Inference on Load” mode broadens accessibility significantly.

This mode decompresses NTC textures during the loading screen and transcodes them into traditional BCn formats, so it adds no per-frame inference cost while still dramatically reducing install sizes. Because the textures then sit in memory as ordinary BCn data, the savings in this mode are on disk and download size rather than in VRAM. Crucially, it works not just on older NVIDIA cards but across AMD and Intel GPUs as well.
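The trade-off between the modes can be pictured as a simple fallback rule. The sketch below is not the RTXNTC SDK API: `NtcMode`, `GpuInfo`, `fast_inference`, and `choose_mode` are hypothetical names invented for illustration, and the rule only encodes what is described above (Inference on Sample where real-time decompression is practical, Inference on Load everywhere else).

```python
from dataclasses import dataclass
from enum import Enum


class NtcMode(Enum):
    """The three modes named in the SDK documentation."""
    INFERENCE_ON_SAMPLE = "Inference on Sample"
    INFERENCE_ON_LOAD = "Inference on Load"
    INFERENCE_ON_FEEDBACK = "Inference on Feedback"


@dataclass
class GpuInfo:
    # Hypothetical flag: True if the GPU can decompress textures
    # in real time (for example via Tensor Cores). A real engine
    # would query this from the driver or the SDK.
    fast_inference: bool


def choose_mode(gpu: GpuInfo) -> NtcMode:
    """Pick a mode using the fallback rule described in the text.

    Inference on Feedback is never returned here because its
    selection criteria are not covered above.
    """
    if gpu.fast_inference:
        # Largest VRAM savings: textures stay compressed in memory.
        return NtcMode.INFERENCE_ON_SAMPLE
    # Broadest compatibility: transcode to BCn once, at load time.
    return NtcMode.INFERENCE_ON_LOAD


print(choose_mode(GpuInfo(fast_inference=False)).value)
```

A real engine would likely weigh more than one flag (available VRAM, frame budget, texture count), but the point of the fallback is that every GPU gets at least the install-size benefit.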

Shrinking the 200 GB Game

Beyond runtime performance, NTC tackles the ballooning install sizes of modern games. Because the neural representation of a texture is significantly smaller than the source data, developers can ship much higher-resolution assets while shrinking the total disk space required for a game. For a player base tired of 200 GB downloads and the need for constant SSD upgrades, this storage-to-VRAM compression pipeline offers a far more practical day-to-day benefit than the high-overhead generative features of DLSS 5. By making high-fidelity assets “cheaper” to store and move, NTC effectively extends the lifespan of the current gaming ecosystem.